WWW.LIVESCIENCE.COM

230 million-year-old dinosaur is oldest ever discovered in North America and changes what we know about how they conquered Earth

A newfound "chicken-size" dinosaur, recently unearthed in Wyoming, changes what paleontologists thought they knew about how dinosaurs spread across the globe.

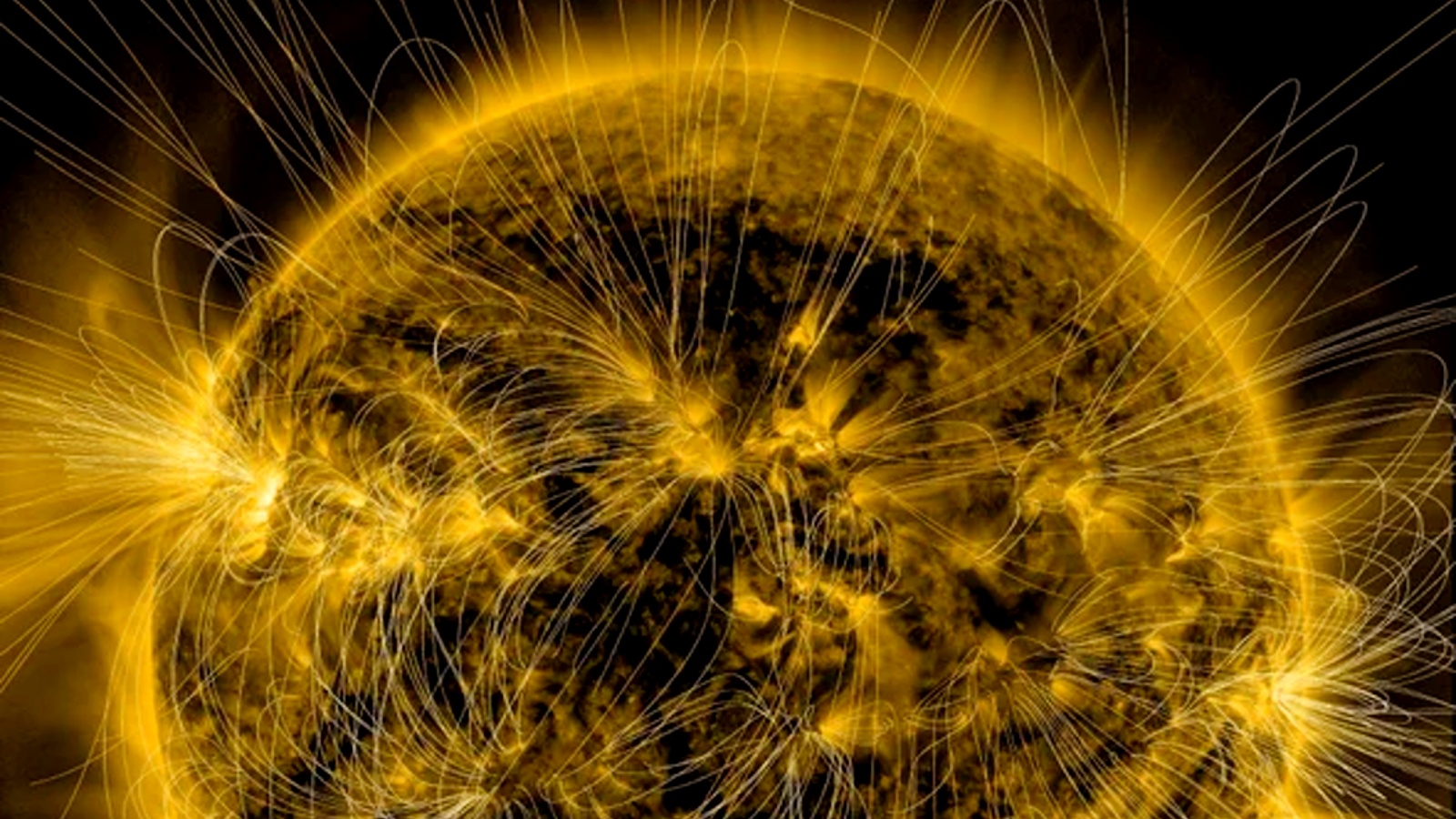

WWW.LIVESCIENCE.COM

X-class solar flares hit a new record in 2024 and could spike further this year but the sun isn't entirely to blame, experts say

There were significantly more X-class solar flares in 2024 than any other year for at least three decades. The arrival of solar maximum was a key reason for the spike, but other factors were also at play.

WWW.LIVESCIENCE.COM

Passenger plane with entirely new 'blended wing' shape aims to hit the skies by 2030

A new type of passenger plane will adopt a design that blends wings into the aircraft's body, which its creators say will cut fuel consumption by 50% and reduce noise.

WWW.GAMESPOT.COM

Marvel Rivals Season 1 Battle Pass - All Skins, Emotes, And Other Rewards

Marvel Rivals Season 1 has arrived, after the shortened Season 0 kicked off the launch of the 6v6 hero shooter. Season 1 adds two new heroes to its roster of popular characters, Mr. Fantastic (Duelist) and Invisible Woman (Strategist). Those two are available at the start of the season, while the rest of the Fantastic Four, Human Torch (Duelist) and The Thing (Vanguard), will join as part of the midseason update, which should come about 45 days from the start of Season 1.

The lore of Season 1 revolves around the Fantastic Four fighting to save New York from Dracula, who has started the Eternal Night and taken control of the city. The new maps take place in this version of New York and many of the cosmetics in the Season 1 battle pass are themed around this plot, giving characters Van Helsing-like makeovers.

The Season 1 battle pass features 65 items, including 10 skins, MVP highlight intros, and currency. The Season 1 battle pass costs 950 Lattice, which is just under $10, but the premium version does contain 600 Lattice and 600 Units, with Units usable in the cosmetic store.

A few highlights from the skins in the battle pass include the All-Butcher Loki, Bounty Hunter Rocket Raccoon, Blood Edge Armor Iron Man, and Blood Berserker Wolverine. All of these give these heroes vampire-hunting costumes, although some of the other costumes aren't part of the theme. As previously confirmed, Marvel Rivals battle passes don't expire, so you can continue to unlock the items you paid for even after the season ends. Below, you can see every item included in the Marvel Rivals Season 1 Battle Pass: Darkhold.

WWW.GAMESPOT.COM

Assassin's Creed Shadows Expansion Leak Details Over 10 Hours Of Gameplay

Details about an expansion for Assassin's Creed Shadows have leaked on the game's Steam page, which has now been scrubbed of this information.

According to Insider Gaming, the expansion is called Claws of Awaji and it features an additional 10 hours of gameplay. Players will be able to travel to a new location and unlock a new weapon type, as well as skills, gear, and abilities. Other than that, there's no info regarding what the expansion's story is.

Assassin's Creed Shadows was originally planned for a release date of February 14, but was delayed once again to March 20. The decision was made in order to further improve its gameplay quality and performance.

WWW.GAMESPOT.COM

The First Berserker: Khazan Demo Launching Soon, Two Months Ahead Of Release

Publisher Nexon has announced that The First Berserker: Khazan is getting a demo on January 16, and players will be able to spend as much time as they want in it.

The game's official Twitter account posted that the demo will go live on PC, PlayStation, and Xbox at 10 AM ET / 7 AM PT with "no end date scheduled." Presumably, this means that the demo will stay up for the foreseeable future for players to check out.

The First Berserker: Khazan is an action-RPG and the next entry in the Dungeon & Fighter series. It's set 800 years before the events of the series, and follows Khazan, who is falsely accused of treason and subsequently banished to the snowy mountains before going on a quest for vengeance. The game features Ben Starr, who voiced Final Fantasy XVI's main protagonist Clive Rosfield, as the voice of Khazan.

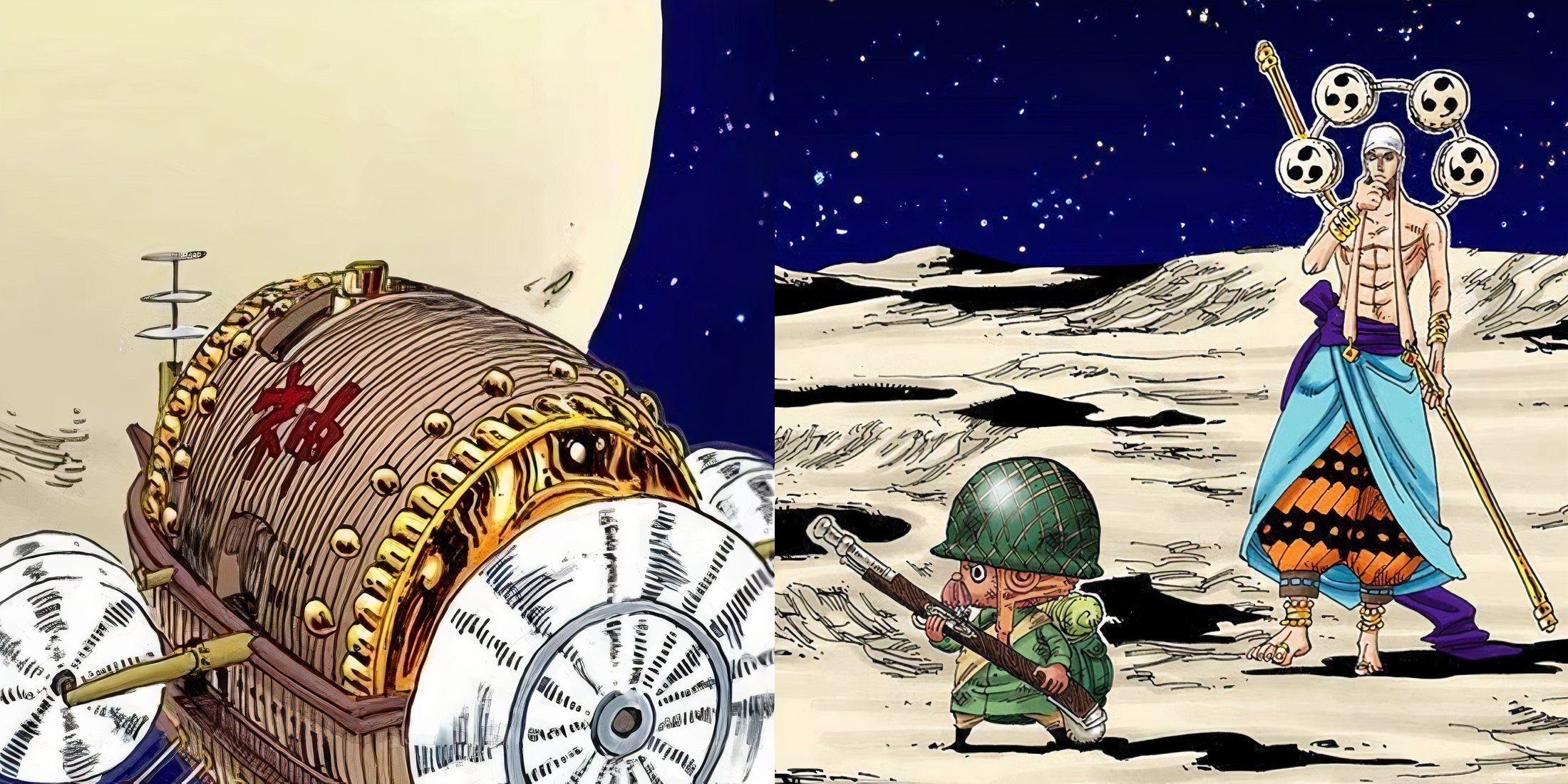

GAMERANT.COM

One Piece: This Cover Story Should Have Been Animated

Due to the massive amount of content and side stories the series has, One Piece has developed an excellent world. With tons of moving parts, various factions, and excellent characters, One Piece is considered by many to be an amazing example of world-building. Though this level of detail has made the series itself extremely long, hopefully it will all pay off in the Final Saga.

GAMERANT.COM

Human Within Developer Talks Making Choices Matter

Signal Space Lab's Human Within straddles the line between virtual reality games and interactive fiction, smoothly shifting from one format to another in a unique way that has yet to be seen on the platform. Its traditional virtual reality moments contain most of the game's interactivity, while the 360-degree live-action scenes host some thought-provoking decision-making.

GAMERANT.COM

Following an Animal Crossing Trend Would Benefit Haunted Chocolatier

When it comes to cozy gaming, Stardew Valley is the holy grail, and Haunted Chocolatier is looking to continue this tradition of excellence. As developer Eric Barone's second game, Haunted Chocolatier can be expected to take some greater risks than its predecessor, sticking to many of its core mechanics while iterating upon them in unique ways.
