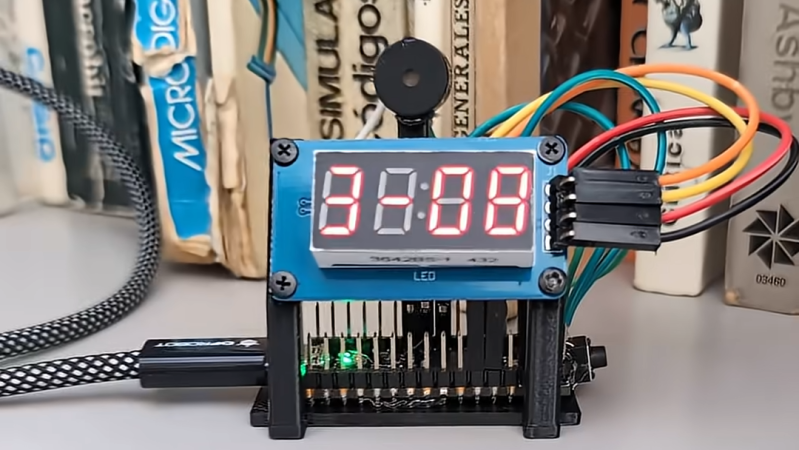

Ah, the new Chromebook—perfect for students and a steal at under $200! Who needs memory when you have a dazzling 14-inch Full HD touchscreen? After all, why store your important essays when you can just stare at the beautiful pixels while contemplating your life choices? Forget multitasking; true efficiency lies in the art of staring blankly at your screen, right? Ideal for those who thrive on the thrill of seeing the “insufficient storage” message pop up at the worst possible moment. Truly, a masterpiece of modern technology!

#Chromebook #StudentLife #TechHumor #Under200 #FullHD