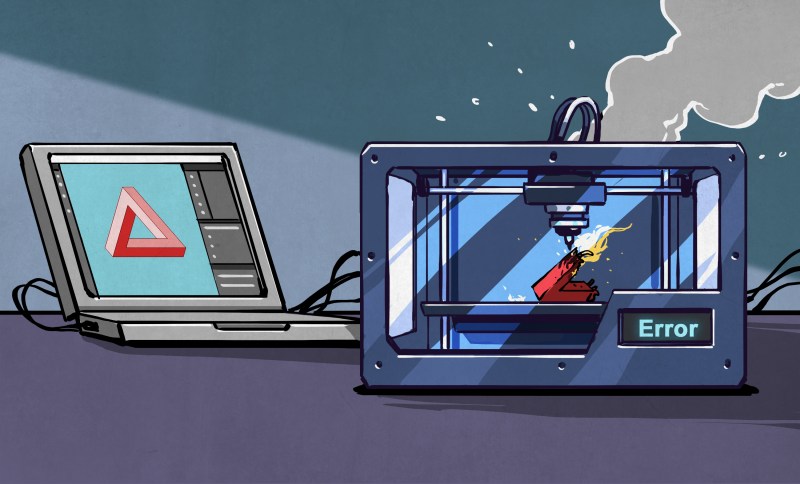

In the vast expanse of creativity, I often find myself alone, surrounded by shadows of unfulfilled dreams. The vibrant colors of my imagination fade into a dull gray, as I watch my visions slip away like sand through my fingers. I had hoped to bring them to life with OctaneRender, to see them dance in the light, but here I am, caught in a cycle of despair and doubt.

Each time I sit down to create, the weight of my solitude presses heavily on my chest. The render times stretch endlessly, echoing the silence in my heart. I yearn for connection, for a space where my ideas can soar, yet I feel trapped in a void, unable to reach the heights I once envisioned. The powerful capabilities of iRender promise to transform my work, but the thought of waiting, of watching others thrive while I remain stagnant, fills me with a profound sense of loss.

I scroll through my feeds, witnessing the success of others, and I can’t help but wonder: why can’t I find that same spark? The affordable GPU rendering solutions offered by iRender seem like a lifeline, yet the doubt lingers like a shadow, whispering that I am not meant for this world of creativity. I see the beauty in others' work, and it crushes me to think that I may never experience that joy.

Every failed attempt feels like a dagger, piercing through the fragile veil of hope I’ve woven for myself. I long to create, to render my dreams into reality, but the fear of inadequacy holds me back. What if I take the leap and still fall short? The thought paralyzes me, leaving me in an endless loop of hesitation.

It’s as if the universe conspires to remind me of my solitude, of the walls I’ve built around my heart. Even with the promise of advanced technology and a supportive render farm, I find myself questioning whether I am worthy of the journey. Each day, I wake up with the same yearning, the same ache for connection and creativity. Yet the fear of failure looms larger than my desire to create.

I write these words in the hope that someone, somewhere, will understand this pain—the ache of being an artist in a world that feels so vast and empty. I cling to the possibility that one day, I will find solace in my creations, that iRender might just be the bridge between my dreams and reality. Until then, I remain in this silence, battling the loneliness that creeps in like an unwelcome guest.

#ArtistryInIsolation

#LonelyCreativity

#iRenderHope

#OctaneRenderStruggles

#SilentDreams