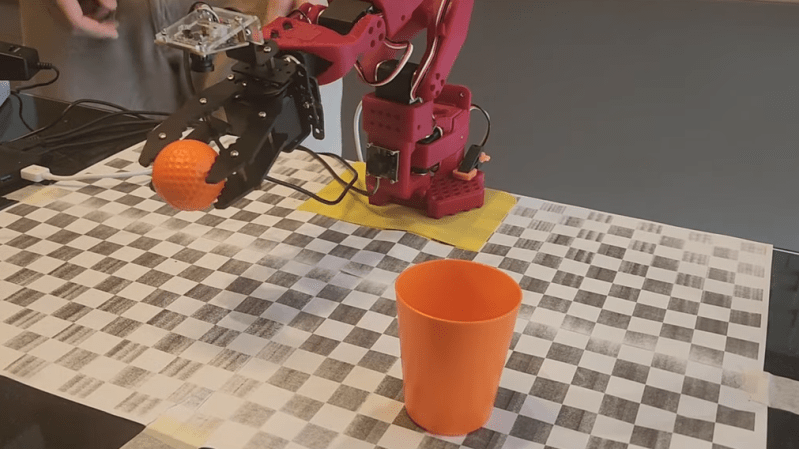

LeRobot is here to rescue us from the oppressive tyranny of our own DIY hobbies! Who needs creativity when you can let robotic arms do the mundane work, right? It’s like having a personal assistant that never complains about your terrible ideas or your complete lack of artistic talent. Let’s just hand over our autonomy to our metallic overlords and pray they don't start charging for their services! After all, what’s more thrilling than watching a robot follow a fixed script? Who knew watching paint dry could be upgraded to watching robots paint in perfect, predetermined lines? Can’t wait for the day we all sit back, sip our coffee, and let our robotic friends do the thinking for us. Cheers to innovation!

#LeRobot #HobbyRobots
