Mock up a website in five prompts

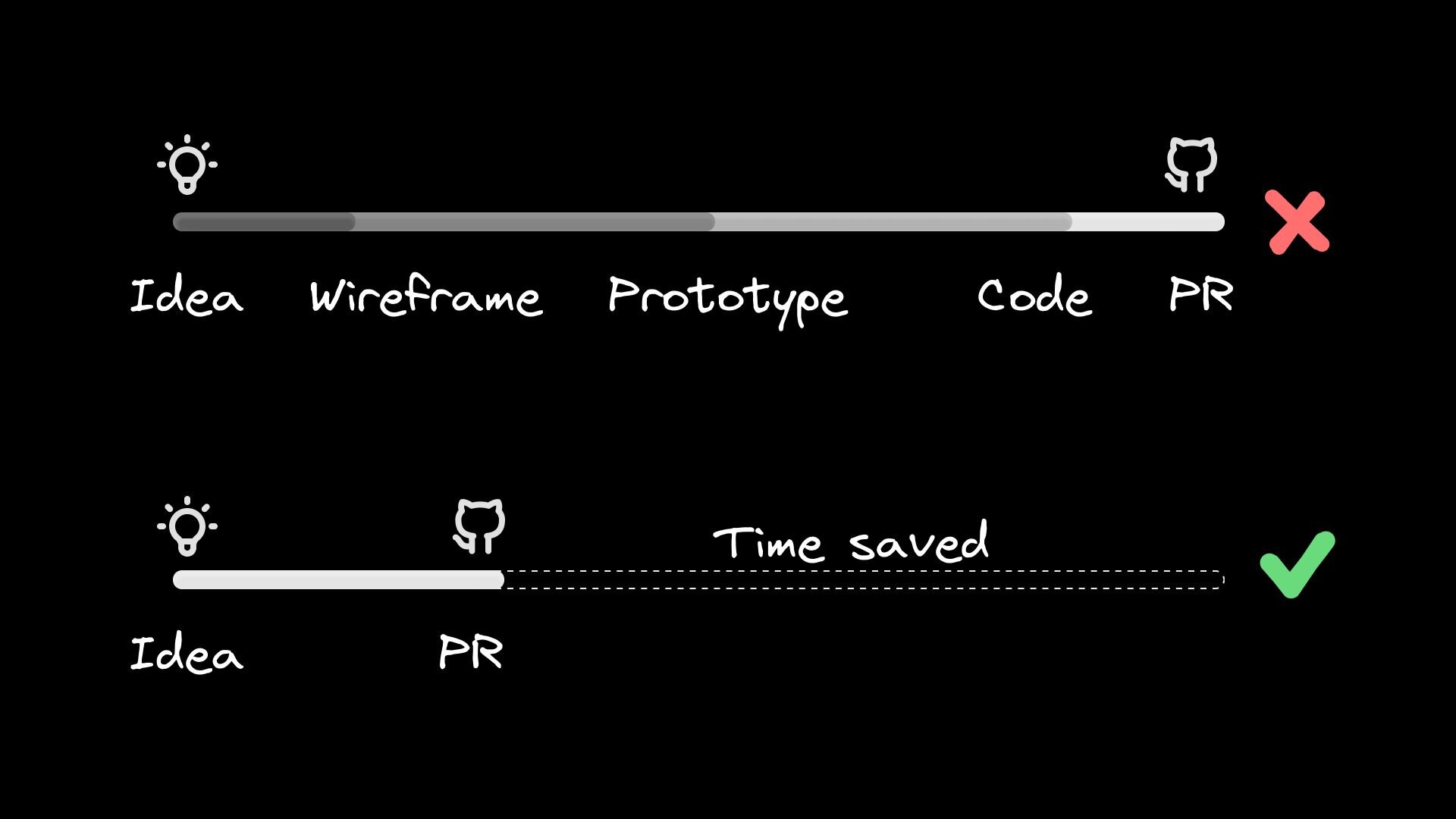

“Wait, can users actually add products to the cart?”

Every prototype faces that question or one like it. You start to explain it’s “just Figma,” “just dummy data,” but what if you didn’t need disclaimers?

What if you could hand clients, or your team, a working, data-connected mock-up of their website, or new pages and components, in less time than it takes to wireframe?

That’s the challenge we’ll tackle today. But first, we need to look at:

The problem with today’s prototyping tools

Pick two: speed, flexibility, or interactivity.

The prototyping ecosystem, despite having amazing software that addresses a huge variety of needs, doesn’t really have one tool that gives you all three.

Wireframing apps let you draw boxes in minutes but every button is fake. Drag-and-drop builders animate scroll triggers until you ask for anything off-template. Custom code frees you… after you wave goodbye to a few afternoons.

AI tools haven’t smashed the trade-off; they’ve just dressed it in flashier costumes. One prompt births a landing page, the next dumps a 2,000-line, worse-than-junior-level React file in your lap. The bottleneck is still there.

Builder’s approach to website mockups

We’ve been trying something a little different to maintain speed, flexibility, and interactivity while mocking full websites. Our AI-driven visual editor:

• Spins up a repo in seconds or connects to your existing one to use the code as design inspiration. React, Vue, Angular, and Svelte all work out of the box.

• Lets you shape components via plain English, visual edits, copy/pasted Figma frames, web inspos, MCP tools, and constant visual awareness of your entire website.

• Commits each change as a clean GitHub pull request your team can review like hand-written code. All your usual CI checks and lint rules apply.

And if you need a tweak, you can comment to @builderio-bot right in the GitHub PR to make asynchronous changes without context switching.

This results in a live site the café owner can interact with today, and a branch your devs can merge tomorrow. Stakeholders get to click actual buttons and trigger real state, with no more “so, just imagine this works” demos.

Let’s see it in action.

From blank canvas to working mockup in five prompts

Today, I’m going to mock up a fake business website. You’re welcome to create a real one.

Before we fire off a single prompt, grab a note and write:

• Business name & vibe

• Core pages

• Primary goal

• Brand palette & tone

That’s it. Don’t sweat the details; we can always iterate. For mine, I wrote:

1. Sunny Trails Bakery: family-owned, feel-good, smells like warm cinnamon.

2. Home, About, Pricing / Subscription Box, Menu.

3. Drive online orders and foot traffic—every CTA should funnel toward “Order Now” or “Reserve a Table.”

4. Warm yellow, chocolate brown, rounded typography, playful copy.

We’re not trying to fit everything here. What matters is clarity on what we’re creating, so the AI has enough context to produce usable scaffolds, and so later tweaks stay aligned with the client’s vision.

Builder will default to using React, Vite, and Tailwind. If you want a different JS framework, you can link an existing repo in that stack. In the near future, you won’t need to do this extra step to get non-React frameworks to function.

An entire website from the first prompt

Now, we’re ready to get going.

Head over to Builder.io and paste in this prompt or your own:

Create a cozy bakery website called “Sunny Trails Bakery” with pages for:

• Home

• About

• Pricing

• Menu

Brand palette: warm yellow and chocolate brown. Tone: playful, inviting. The restaurant is family-owned, feel-good, and smells like cinnamon.

The goal of this site is to drive online orders and foot traffic. Every CTA should funnel toward "Order Now" or "Reserve a Table."

Once you hit enter, Builder will spin up a new dev container, and then inside that container, the AI will build out the first version of your site. You can leave the page and come back when it’s done.

Now, before we go further, let’s create our repo, so that we get version history right from the outset. Click “Create Repo” up in the top right, and link your GitHub account.

Once the process is complete, you’ll have a brand new repo.

If you need any help on this step, or any of the below, check out these docs.

Making the mockup’s order system work

From our one-shot prompt, we’ve already got a really nice start for our client. However, when we press the “Order Now” button, we just get a generic alert. Let’s fix this.

The best part about connecting to GitHub is that we get version control. Head back to your dashboard and edit the settings of your new project. We can give it a better name, and then, in the “Advanced” section, we can change the “Commit Mode” to “Pull Requests.”

Now, we have the ability to create new branches right within Builder, allowing us to make drastic changes without worrying about the main version. This is also helpful if you’d like to show your client or team a few different versions of the same prototype.

On a new branch, I’ll write another short prompt:

Can you make the "Order Now" button work, even if it's just with dummy JSON for now?

As you can see in the GIF above, Builder creates an ordering system and a fully mobile-responsive cart and checkout flow.

Now, we can click “Send PR” in the top right, and we have an ordinary GitHub PR that can be reviewed and merged as needed.

This is what’s possible in two prompts.
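To give a feel for what "dummy JSON" ordering logic can look like under the hood, here is a minimal TypeScript sketch. It is not the code Builder generated for this project. The menu items, prices, and function names (`MENU`, `addToCart`, `cartTotalCents`, `formatPrice`) are all hypothetical, chosen only to illustrate the idea of a cart driven by hard-coded data.

```typescript
// Hypothetical dummy data: ids, names, and prices are illustrative only.
interface MenuItem {
  id: string;
  name: string;
  priceCents: number;
}

interface CartLine {
  item: MenuItem;
  quantity: number;
}

const MENU: MenuItem[] = [
  { id: "cinnamon-roll", name: "Cinnamon Roll", priceCents: 450 },
  { id: "sourdough", name: "Sourdough Loaf", priceCents: 800 },
];

// Returns a new cart with one more of the given item. The input cart is
// never mutated, so this slots into React state or a reducer cleanly.
function addToCart(cart: CartLine[], itemId: string): CartLine[] {
  const item = MENU.find((m) => m.id === itemId);
  if (!item) {
    throw new Error(`Unknown menu item: ${itemId}`);
  }
  const alreadyInCart = cart.some((line) => line.item.id === itemId);
  if (alreadyInCart) {
    return cart.map((line) =>
      line.item.id === itemId ? { ...line, quantity: line.quantity + 1 } : line
    );
  }
  return [...cart, { item, quantity: 1 }];
}

// Totals are kept in integer cents to avoid floating-point rounding.
function cartTotalCents(cart: CartLine[]): number {
  return cart.reduce(
    (sum, line) => sum + line.item.priceCents * line.quantity,
    0
  );
}

function formatPrice(cents: number): string {
  return `$${(cents / 100).toFixed(2)}`;
}

// Usage: two cinnamon rolls and one sourdough loaf.
let cart: CartLine[] = [];
cart = addToCart(cart, "cinnamon-roll");
cart = addToCart(cart, "cinnamon-roll");
cart = addToCart(cart, "sourdough");
console.log(formatPrice(cartTotalCents(cart))); // prints "$17.00"
```

Because the data lives in a plain array, swapping it for a real API later means replacing `MENU` with a fetch call while the cart functions stay the same.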
For our third, let’s gussy up the style.

If you’re like me, you might spend a lot of time admiring other people’s cool designs and learning how to code up similar components in your own style.

Luckily, Builder has this capability, too, with our Chrome extension. I found a “Featured Posts” section on OpenAI’s website, where I like how the layout and scrolling work. We can copy and paste it onto our “Featured Treats” section, retaining our café’s distinctive brand style.

Don’t worry: OpenAI doesn’t mind a little web scraping.

You can do this with any component on any website, so your own projects can very quickly become a “best of the web” if you know what you’re doing.

Plus, you can use Figma designs in much the same way, with even better design fidelity. Copy and paste a Figma frame with our Figma plugin, and tell the AI to use the component either as inspiration or as a 1:1 reference for what the design should be.

Now, we’re ready to send our PR. This time, let’s take a closer look at the code the AI has created.

As you can see, the code is neatly formatted into two reusable components. Scrolling down further, I find a CSS file and then the actual implementation on the homepage, with clean JSON to represent the dummy post data.

Design tweaks to the mockup with visual edits

One issue that cropped up when the AI brought in the OpenAI layout is that it changed my text from “Featured Treats” to “Featured Stories & Treats.” I’ve realized I don’t like either, and I want to replace that text with: “Fresh Out of the Bakery.”

It would be silly, though, to prompt the AI just for this small tweak. Let’s switch into Edit Mode.

Edit Mode lets you select any component and change any of its content or underlying CSS directly.
You get a host of Webflow-like options to choose from, so that you can finesse the details as needed.

Once you’ve made all the visual changes you want, maybe tweaking a button color or a border radius, you can click “Apply Edits,” and the AI will ensure the underlying code matches your repo’s style.

Async fixes to the mockup with Builder Bot

Now, our pull request is nearly ready to merge, but I found one issue with it:

When we copied the OpenAI website layout earlier, one of the blog posts had a video as its featured graphic instead of just an image. This is cool for OpenAI, but for our bakery, I just wanted images in this section. Since I didn’t instruct Builder’s AI otherwise, it went ahead and followed the layout and created extra code for video capability.

No problem. We can fix this inside GitHub with our final prompt. We just need to comment on the PR and tag @builderio-bot. Within about a minute, Builder Bot has successfully removed the video functionality, leaving a minimal diff that affects only the code it needed to.

Returning to my project in Builder, I can see that the bot’s changes are accounted for in the chat window as well, and I can use the live preview link to make sure my site works as expected.

Now, if this were a real project, you could easily deploy this to the web for your client. After all, you’ve got a whole GitHub repo. This isn’t just a mockup; it’s actual code you can tweak, with Builder or Cursor or by hand, until you’re satisfied to run the site in production.

So, why use Builder to mock up your website?

Sure, this has been a somewhat contrived example. A real prototype is going to look prettier, because I’m going to spend more time on pieces of the design that I don’t like as much.

But that’s the point of the best AI tools: they don’t take you, the human, out of the loop.

You still get to make all the executive decisions, and they respect your hard work.
Since you can constantly see all the code the AI creates, work in branches, and prompt with component-level precision, you can stop worrying about AI overwriting your opinions and start using it more as the tool it’s designed to be.

You can copy in your team’s Figma designs, import web inspos, connect MCP servers to get Jira tickets in hand, and, most importantly, work with existing repos full of existing styles that Builder will understand and match, just like it matched OpenAI’s layout to our little café.

So, we get speed, flexibility, and interactivity all the way from prompt to PR to production.

Try Builder today.
