In a world where smartphones have become extensions of our very beings, it seems only fitting that the latest buzz is about none other than the Trump Mobile and its dazzling Gold T1 smartphone. Yes, you heard that right: a phone that's as golden as its namesake's aspirations and, arguably, just as inflated!

Let's dive into the *urgent* questions we all have about this technological marvel. First on the list: is it true that the Trump Mobile can only connect to social media platforms that feature a certain orange-tinted filter? Because if it doesn't, what's the point, really? We all know that a phone's worth is measured by its ability to curate the perfect image, preferably one that makes the user look like a billion bucks, just like its namesake himself.

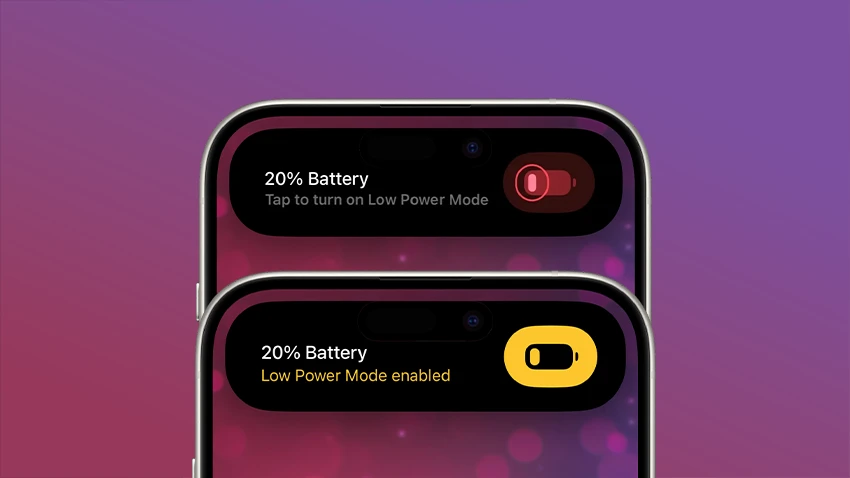

And while we’re on the topic of money, can we talk about the Gold T1’s price tag? Rumor has it that it’s priced like a luxury yacht, but comes with the battery life of a damp sponge. A perfect combo for those who wish to flaunt their wealth while simultaneously being unable to scroll through their Twitter feed without a panic attack when the battery drops to 1%.

Now, let’s not forget about the *data plan*. Is it true that the plan includes unlimited access to news outlets that only cover “the best” headlines? Because if I can’t get my daily dose of “Trump is the best” articles, then what’s the point of having a phone that’s practically a golden trophy? I can just see the commercials now: “Get your Trump Mobile and never miss an opportunity to revel in your own glory!”

Furthermore, what about the customer service? One can only imagine calling for assistance and getting a voicemail that says, “We’re busy making America great again, please leave a message after the beep.” If you’re lucky, you might get a callback… in a week, or perhaps never. After all, who needs help when you have a phone that’s practically an icon of success?

Let’s also discuss the design. Is it true that the Gold T1 comes with a built-in mirror so you can admire yourself while pretending to check your messages? Because nothing screams “I’m important” like a smartphone that encourages narcissism at every glance.

And what about the camera? Will it have a special feature that automatically enhances your selfies to ensure you look as good as the carefully curated versions of yourself? I mean, we can’t have anything less than perfection when it comes to our online personas, can we?

In conclusion, while the Trump Mobile and Gold T1 smartphone might promise a new era of connectivity and self-admiration, one can only wonder if it’s all a glittery façade hiding a less-than-stellar user experience. But hey, for those who’ve always dreamt of owning a piece of tech that’s as bold and brash as its namesake, this might just be the device for you!

#TrumpMobile #GoldT1 #SmartphoneHumor #TechSatire #DigitalNarcissism