
WWW.NINTENDOLIFE.COM
Opinion: It's Not My GOTY, But This Nintendo Game Was A Standout 2024 Memory

Image: Nile Bowie

I'll admit, I've never been fond of speedrunning. The thought of shaving milliseconds off a time in a video game while playing the same sequence over and over sounds, in theory, more frustrating than fun. Sure, as a spectator, there is definitely joy in appreciating the wizardry of players pulling off pixel-perfect feats and shattering a runtime record. But I have neither the time nor the mettle to contemplate doing so myself.

Fortunately, this year gave us a game that wove together 8-bit-era charm, bite-sized accessibility, and addictive skill refinement. Nintendo World Championships: NES Edition was my gateway to finally getting speedrunning, and it's been some of the most fun I've had gaming in 2024.

It's the only game I've played this year that made me let out an involuntary shout of triumph when I bested my competitors in consecutive speedrunning challenges, earning myself a (screenshot of a) gold trophy. It was sweet, sweet consolation for all the IRL trophies I failed to win throughout my life, and it brought me immense satisfaction.

Image: Nile Bowie

Just to recap, the game was released in July and features condensed speedrunning challenges extracted from 13 iconic NES titles. Its World Championship Mode allows for placement on a weekly leaderboard, while Survival Mode pits you against other players' ghost times across three challenges to avoid elimination. There's also an eight-player party mode.

The game received solid but not glowing reviews (Nintendo Life gave it a 7/10). Some critics deem it less ambitious and creative than developer indieszero's previous retro compilations, the quirky NES Remix series on Wii U and 3DS. I wouldn't necessarily dispute that, but I found NWC's focus on pure speedrunning challenges to be meticulously executed.
Speed demon

Part of what makes it so difficult to put down is its snappy game feel and sound design. Each challenge begins with a whistle countdown and ends with a satisfying pop and synth effect that tugs at your dopamine receptors, urging you to smash 'Play again' to improve your PB. It's an even better experience playing with an NES controller for the as-intended button feel.

Though it might not be my Game of the Year (that one goes to Animal Well), it brought out the competitor in me and ultimately made me a better player. While we've all played our share of NES games over the years, World Championships' emphasis on mastery helped me discover the deep control-scheme nuances of classic titles in a way I hadn't before.

Take, for example, Zelda II: The Adventure of Link, a game I'd barely touched before this, due in part to its infamous difficulty. Working my way through its speedrunning challenges helped me come to grips with the game's punishing combat. Some of its challenges, like the Master-rank 'Neigh Slayer', initially felt impossible to complete with an S-tier time.

Images: Nile Bowie

That one in particular involves beating a horse-headed boss with precisely timed aerial sword slices, a move that eventually felt intuitive with practice. When I later fired up the original version of Zelda II on Nintendo Switch Online, I found myself enjoying the game far more than I ever had before, because World Championships had essentially shown me the ropes.

Even games I know fairly well, like Super Mario Bros., Excitebike, and Kirby's Adventure, had new layers revealed with help from the Nintendo Power-inspired 'Classified Information' sheets, which cover the most efficient routes, combat strategies, and movement tricks. I came away with a deeper appreciation of the craftsmanship behind these classics.

For several months, I've tackled its weekly challenges religiously, even managing to top the leaderboard (once).
I find the game's competitive modes perfect for zeroing in on the specific challenges on offer every seven days. Focusing on the handful of challenges each week helped me push the envelope and consistently improve my personal best times.

Images: Nile Bowie

Watching the weekly replays of the top players has been equally fascinating (Nintendo has since put up a website showing off each week's top performances). Seeing their precision and efficiency in action is humbling and sometimes surprising: there was a small controversy early on when a cheeky player exploited a glitch in Donkey Kong that few people knew existed.

Engagement with the game has understandably waned in the months since its release, with fewer players taking part in weekly competitions. While it's a long shot, I've still got my fingers crossed for DLC. Punch-Out!! is a glaring first-party omission, and the inclusion of landmark third-party NES classics from the Contra, Castlevania, or Mega Man series would draw players back if agreements could be made with Konami or Capcom.

One of the coolest things the developers could add is a new standalone challenge based on the actual 1990 Nintendo World Championships custom cartridge (among the rarest NES collectibles, selling for tens of thousands of dollars), which tracks the cumulative score across three games played in sequence: Super Mario Bros., Rad Racer, and Tetris. Incorporating it feels like a no-brainer. There is also niche demand for it, with various reproductions of the custom cart available, one of which Nintendo has acted against. Give the people what they want, I say.

The announcement trailer leaned heavily into the nostalgia for the 1990 event

Many will naturally be pining for an SNES Edition of this title with the same format, just as folks were hoping for an SNES Remix. It's hard to say whether there'll be such a follow-up, but one hopes Nintendo and indieszero don't sit on their hands.
Nintendo won't shy away from further celebrations of its legacy, but we'll likely have to wait for another big anniversary or a big gap between heavy hitters in the Switch (2) release calendar!

As it stands, Nintendo World Championships: NES Edition may not have been the flashiest game of 2024, but, for me, it remains a standout with plenty of untapped potential. It's a loving homage to the glory days of the NES and the 8-bit culture that surrounded it. Most of all, it's a celebration of the timeless classics that still shape my love for gaming.

Image: Zion Grassl / Nintendo Life

Nile Bowie is an American journalist based in Singapore who grew up on Nintendo. When he isn't reporting on politics, business, and international relations, he's very likely playing his Switch, tracking down old Game Boy cartridges, or reading Nintendo Life with a cat on his lap.

System: Nintendo Switch
Publisher: Nintendo
Developer: Nintendo, indieszero
Genre: Action, Party, Platformer, Racing
Players: 8 (1 Online)
Release Date (Nintendo Switch): 18th Jul 2024, $59.99; 18th Jul 2024, 49.99
Release Date (Switch eShop): 18th Jul 2024, $29.99; 18th Jul 2024, 24.99
Official Site: nintendo.com


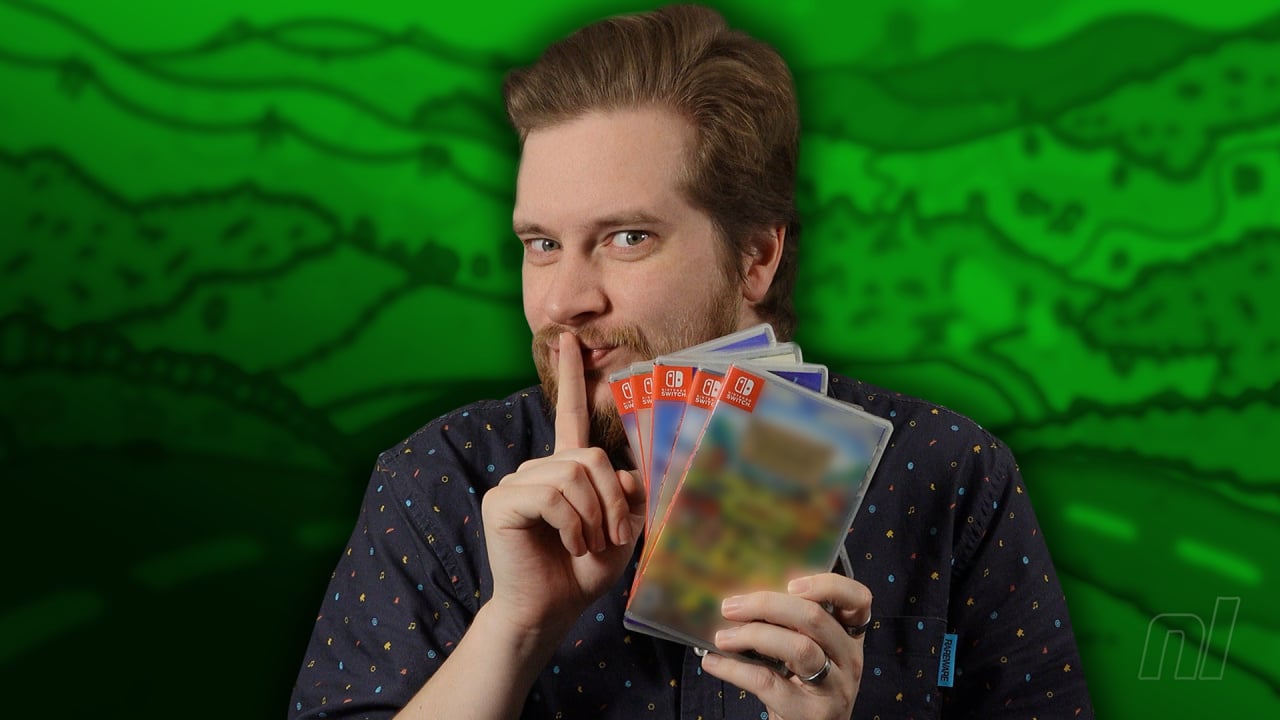

WWW.NINTENDOLIFE.COM
Video: Here Are Alex's Top 5 Nintendo Switch Games Of 2024

Third time's a charm, as they say. Yes, we're back with our third and final look at our video team's top five Switch games of 2024.

It's worth repeating here that these aren't necessarily games that launched in 2024, just games that the video chaps played in 2024. You'll see why we felt the need to reiterate that when you see what the lovely Alex has chosen for his list. Some new, some old(er), but all of them are absolute whoppers.

In case you missed our previous videos, here's a reminder of what Felix and Zion chose for their respective lists:

Felix: Felix Navidad
Zion: Zi-on the first day of Christmas

So there you have it! What do you make of the chaps' choices for this year? Does your own GotY for 2024 feature in any of these videos? Let us know.

Ollie is Nintendo Life's resident horror fanatic. When he's not knee-deep in Resident Evil and Silent Hill lore, he likes to dive into a good horror book while nursing a lovely cup of tea. He also enjoys long walks and listens to everything from TOOL to Chuck Berry.


TECHCRUNCH.COM
The TechCrunch Cyber Glossary

The cybersecurity world is full of technical lingo and jargon. At TechCrunch, we have been writing about cybersecurity for years, and even we sometimes need a refresher on what exactly a specific word or expression means. That's why we have created this glossary, which includes some of the most common and not-so-common words and expressions that we use in our articles, and explanations of how, and why, we use them.

This is a developing compendium, and we will update it regularly.

Advanced persistent threat (APT)
An advanced persistent threat (APT) is often categorized as a hacker, or group of hackers, that gains and maintains unauthorized access to a targeted system. The main aim of an APT intruder is to remain undetected for long periods of time, often to conduct espionage and surveillance, to steal data, or to sabotage critical systems.

APTs are traditionally well-resourced hackers, with the funding to pay for their malicious campaigns and access to hacking tools typically reserved for governments. As such, many of the long-running APT groups are associated with nation states, like China, Iran, North Korea, and Russia. In recent years, we've seen examples of non-nation-state cybercriminal groups that are financially motivated (such as through theft and money laundering) carrying out cyberattacks similar in persistence and capabilities to those of some traditional government-backed APT groups.

(See: Hacker)

Arbitrary code execution
The ability to run commands or malicious code on an affected system, often because of a security vulnerability in the system's software. Arbitrary code execution can be achieved either remotely or with physical access to an affected system (such as someone's device).
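One common way a software flaw turns into arbitrary code execution is when a program treats untrusted input as code. The Python sketch below is our own illustration, not an example from the glossary. The function names and the settings format are hypothetical, while `eval` and `ast.literal_eval` are real standard-library functions.

```python
import ast


def parse_settings_unsafe(text: str):
    # DANGEROUS: eval() runs any Python expression, so attacker-supplied
    # text can execute code instead of merely describing data.
    return eval(text)


def parse_settings_safe(text: str):
    # ast.literal_eval() accepts only literals (numbers, strings, lists,
    # dicts, booleans, None, ...) and raises ValueError for anything else.
    return ast.literal_eval(text)


benign = "{'volume': 7, 'muted': False}"
# Harmless stand-in for a malicious payload: it imports a module and calls a
# function. A real attacker could call something far worse in the same way.
payload = "__import__('os').getcwd()"

print(parse_settings_unsafe(benign))         # {'volume': 7, 'muted': False}
print(parse_settings_safe(benign))           # {'volume': 7, 'muted': False}
print(type(parse_settings_unsafe(payload)))  # <class 'str'>: the code ran

try:
    parse_settings_safe(payload)
except ValueError:
    print("literal_eval rejected the payload")
```

The fix is a design choice, not a filter. Parsing data with a parser that only understands data removes the path from input to execution.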
In cases where arbitrary code execution can be achieved over the internet, security researchers typically call this remote code execution.

Often, code execution is used as a way to plant a back door for maintaining long-term and persistent access to that system, or for running malware that can be used to access deeper parts of the system or other devices on the same network.

(See also: Remote code execution)

Black/white hat
Hackers have historically been categorized as either black hat or white hat, usually depending on the motivations of the hacking activity carried out. A black hat hacker may be someone who might break the law and hack for money or personal gain, such as a cybercriminal. White hat hackers generally hack within legal bounds, like as part of a penetration test sanctioned by the target company, or to collect bug bounties by finding flaws in various software and disclosing them to the affected vendor. Those who hack with less clear-cut motivations may be regarded as gray hats. Famously, the hacking group the L0pht used the term gray hat in an interview with The New York Times Magazine in 1999. Although the terms are still common in modern security parlance, many have moved away from the hat terminology.

(Also see: Hacker, Hacktivist)

Botnet
Botnets are networks of hijacked internet-connected devices, such as webcams and home routers, that have been compromised by malware (or sometimes through weak or default passwords) so they can be used in cyberattacks. Botnets can be made up of hundreds or thousands of devices and are typically controlled by a command-and-control server that sends out commands to ensnared devices.
Botnets can be used for a range of malicious purposes, like using the distributed network of devices to mask and shield the internet traffic of cybercriminals, deliver malware, or harness their collective bandwidth to maliciously crash websites and online services with huge amounts of junk internet traffic.

(See also: Command-and-control server; Distributed denial-of-service)

Bug
A bug is essentially the cause of a software glitch, such as an error or a problem that causes the software to crash or behave in an unexpected way. In some cases, a bug can also be a security vulnerability.

The computing sense of the term bug is popularly traced to 1947, a time when early computers were the size of rooms and made up of heavy mechanical and moving equipment. The first known incident of a bug found in a computer was when a moth disrupted the electronics of one of these room-sized computers.

(See also: Vulnerability)

Command-and-control (C2) server
Command-and-control servers (also known as C2 servers) are used by cybercriminals to remotely manage and control their fleets of compromised devices and to launch cyberattacks, such as delivering malware over the internet and launching distributed denial-of-service attacks.

(See also: Botnet; Distributed denial-of-service)

Cryptojacking
Cryptojacking is when a device's computational power is used, with or without the owner's permission, to generate cryptocurrency. Developers sometimes bundle code in apps and on websites that then uses the device's processors to complete the complex mathematical calculations needed to create new cryptocurrency. The generated cryptocurrency is then deposited in virtual wallets owned by the developer.

Some malicious hackers use malware to deliberately compromise large numbers of unwitting computers to generate cryptocurrency on a large and distributed scale.

Data breach
When we talk about data breaches, we ultimately mean the improper removal of data from where it should have been.
But the circumstances matter and can alter the terminology we use to describe a particular incident.

A data breach is when protected data is confirmed to have improperly left the system where it was originally stored, which is usually established when someone discovers the compromised data. More often than not, we're referring to the exfiltration of data by a malicious cyberattacker, or to data otherwise detected as a result of an inadvertent exposure. Depending on what is known about the incident, we may describe it in more specific terms.

(See also: Data exposure; Data leak)

Data exposure
A data exposure (a type of data breach) is when protected data is stored on a system that has no access controls, such as because of human error or a misconfiguration. This might include cases where a system or database is connected to the internet but without a password. Just because data was exposed doesn't mean the data was actively discovered, but the incident could nevertheless still be considered a data breach.

Data leak
A data leak (a type of data breach) is where protected data is stored on a system in a way that allowed it to escape, such as due to a previously unknown vulnerability in the system or by way of insider access (such as an employee). A data leak can mean that data could have been exfiltrated or otherwise collected, but there may not always be the technical means, such as logs, to know for sure.

Distributed denial-of-service (DDoS)
A distributed denial-of-service, or DDoS, is a kind of cyberattack that involves flooding targets on the internet with junk web traffic in order to overload and crash the servers and cause the service, such as a website, online store, or gaming platform, to go down.

DDoS attacks are launched by botnets, which are made up of networks of hacked internet-connected devices (such as home routers and webcams) that can be remotely controlled by a malicious operator, usually from a command-and-control server.
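A back-of-envelope Python model helps show why a flood overwhelms a server, and why attackers spread it across many devices. Everything in it is a hypothetical simplification: the function name, the numbers, and the assumption that an overloaded server answers a random subset of requests. Real servers often degrade or crash less gracefully.

```python
def legit_served_fraction(capacity: float, legit_rps: float,
                          bots: int, junk_rps_per_bot: float) -> float:
    """Share of legitimate requests a server can still answer.

    Simplifying assumptions (hypothetical model): the server handles at most
    `capacity` requests per second, and when overloaded it answers a random
    subset of incoming requests, so legitimate and junk requests are equally
    likely to be dropped.
    """
    total = legit_rps + bots * junk_rps_per_bot
    if total <= capacity:
        return 1.0
    return capacity / total


# Hypothetical numbers: capacity of 1,000 requests/second, 200 legitimate
# requests/second, and each hijacked device sending 5 junk requests/second.
for bots in (0, 10, 1_000, 10_000):
    share = legit_served_fraction(1_000, 200, bots, 5)
    print(f"{bots:>6} bots -> {share:.1%} of legitimate requests served")
```

With these made-up numbers, 10 devices go unnoticed, but 1,000 devices each sending only a trickle of traffic cut the share of legitimate requests served to roughly 19%.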
Botnets can be made up of hundreds or thousands of hijacked devices.

While a DDoS is a form of cyberattack, these data-flooding attacks are not hacks in themselves, as they don't involve the breach and exfiltration of data from their targets, but instead cause a denial-of-service event to the affected service.

(See also: Botnet; Command-and-control server)

Encryption
Encryption is the way and means by which information, such as files, documents, and private messages, is scrambled to make the data unreadable to anyone other than its intended owner or recipient. Encrypted data is typically scrambled using an encryption algorithm, essentially a set of mathematical formulas that determines how the data is transformed.

Nearly all modern encryption algorithms in use today are open source, allowing anyone (including security professionals and cryptographers) to review and check the algorithm to make sure it's free of faults or flaws. Some encryption algorithms are stronger than others, meaning data protected by some weaker algorithms can be decrypted by harnessing large amounts of computational power.

Encryption is different from encoding, which simply converts data into a different and standardized format, usually so that computers can read the data.

(See also: End-to-end encryption)

End-to-end encryption (E2EE)
End-to-end encryption (or E2EE) is a security feature built into many messaging and file-sharing apps, and is widely considered one of the strongest ways of securing digital communications as they traverse the internet.

E2EE scrambles the file or message on the sender's device before it's sent, in a way that allows only the intended recipient to decrypt its contents, making it near-impossible for anyone (including a malicious hacker, or even the app maker) to snoop on someone's private communications.
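The E2EE idea of scrambling a message on the sender's device so that only the recipient can unscramble it can be sketched in Python. This is a toy, assuming a Diffie-Hellman key agreement with made-up parameters and a homemade SHA-256 keystream. Real messengers use vetted protocols, and none of the names below come from any app. The last two lines contrast encryption with encoding, which the Encryption entry distinguishes.

```python
import base64
import hashlib
import secrets

# Toy Diffie-Hellman group. 2**127 - 1 is a Mersenne prime; G = 3 is an
# arbitrary base chosen for illustration. Real apps use vetted parameters or
# elliptic curves (for example X25519), not these values.
P = 2**127 - 1
G = 3


def make_keypair() -> tuple[int, int]:
    private = secrets.randbelow(P - 2) + 1   # never leaves the user's device
    public = pow(G, private, P)              # safe to relay through a server
    return private, public


def derive_key(my_private: int, their_public: int) -> bytes:
    # Both sides compute G**(a*b) mod P and hash it into a 32-byte key.
    shared = pow(their_public, my_private, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


def xor_stream(key: bytes, data: bytes) -> bytes:
    # Toy stream cipher: XOR data with SHA-256(key || byte offset).
    # The same call encrypts and decrypts. It has no nonce and no
    # authentication, so it must not be used for real messages.
    out = bytearray()
    for offset in range(0, len(data), 32):
        pad = hashlib.sha256(key + offset.to_bytes(8, "big")).digest()
        out.extend(b ^ k for b, k in zip(data[offset:offset + 32], pad))
    return bytes(out)


alice_private, alice_public = make_keypair()
bob_private, bob_public = make_keypair()

# The app's server only ever sees alice_public and bob_public.
k_alice = derive_key(alice_private, bob_public)
k_bob = derive_key(bob_private, alice_public)

ciphertext = xor_stream(k_alice, b"meet at noon")  # on Alice's device
plaintext = xor_stream(k_bob, ciphertext)          # on Bob's device
print(plaintext)                                   # b'meet at noon'

# Encoding, by contrast, uses no key: anyone can reverse it.
encoded = base64.b64encode(b"meet at noon")
print(base64.b64decode(encoded))                   # b'meet at noon'
```

The point of the design is that the private numbers never leave either device. A server relaying the public values cannot derive the key, which is why an app maker in an E2EE system has nothing to hand over.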
In recent years, E2EE has become the default security standard for many messaging apps, including Apple's iMessage, Facebook Messenger, Signal, and WhatsApp.

E2EE has also become the subject of governmental frustration in recent years, as encryption makes it impossible for tech companies or app providers to hand over information that they themselves do not have access to.

(See also: Encryption)

Escalation of privileges
Most modern systems are protected with multiple layers of security, including the ability to set up user accounts with more restricted access to the underlying system's configurations and settings. This prevents these users, or anyone with improper access to one of these user accounts, from tampering with the core underlying system. However, an escalation of privileges event can involve exploiting a bug or tricking the system into granting the user more access rights than they should have.

Malware can also take advantage of bugs or flaws that allow escalation of privileges to gain deeper access to a device or a connected network, potentially allowing the malware to spread.

Exploit
An exploit is the way and means by which a vulnerability is abused or taken advantage of, usually in order to break into a system.

(See also: Bug; Vulnerability)

Extortion
In general terms, extortion is the act of obtaining something, usually money, through the use of force and intimidation. Cyber extortion is no different, as it typically refers to a category of cybercrime whereby attackers demand payment from victims by threatening to damage, disrupt, or expose their sensitive information.

Extortion is often used in ransomware attacks, where hackers typically exfiltrate company data before demanding a ransom payment from the hacked victim.
But extortion has quickly become its own category of cybercrime, with many financially motivated hackers, often younger ones, opting to carry out extortion-only attacks, which forgo the use of encryption in favor of simple data theft.

(Also see: Ransomware)

Forensics
Forensic investigations involve analyzing data and information contained in a computer, server, or mobile device, looking for evidence of a hack, crime, or some sort of malfeasance. Sometimes, in order to access the data, corporate or law enforcement investigators rely on specialized devices and tools, like those made by Cellebrite and Grayshift, which are designed to unlock and break the security of computers and cellphones to access the data within.

Hacker
There is no one single definition of hacker. The term has its own rich history, culture, and meaning within the security community. Some incorrectly conflate hackers, or hacking, with wrongdoing.

By our definition and use, we broadly refer to a hacker as someone who is a breaker of things, usually by altering how something works to make it perform differently in order to meet their objectives. In practice, that can be something as simple as repairing a machine with non-official parts to make it function differently than intended, or work even better.

In the cybersecurity sense, a hacker is typically someone who breaks a system or breaks the security of a system. That could be anything from an internet-connected computer system to a simple door lock.
But the person's intentions and motivations (if known) matter in our reporting, and guide how we accurately describe the person or their activity.

There are ethical and legal differences between a hacker who works as a security researcher, professionally tasked with breaking into a company's systems with its permission to identify security weaknesses that can be fixed before a malicious individual has a chance to exploit them, and a malicious hacker who gains unauthorized access to a system and steals data without obtaining anyone's permission.

Because the term hacker is inherently neutral, we generally apply descriptors in our reporting to provide context about who we're talking about. If we know that an individual works for a government and is contracted to maliciously steal data from a rival government, we're likely to describe them as a nation-state or government hacker (or an advanced persistent threat), for example. If a gang is known to use malware to steal funds from individuals' bank accounts, we may describe them as financially motivated hackers, or if there is evidence of criminality or illegality (such as an indictment), we may describe them simply as cybercriminals.

And if we don't know motivations or intentions, or a person describes themselves as such, we may simply refer to a subject neutrally as a hacker, where appropriate.

Hack-and-leak operation
Sometimes, hacking and stealing data is only the first step. In some cases, hackers then leak the stolen data to journalists, or directly post the data online for anyone to see. The goal can be either to embarrass the hacking victim or to expose alleged malfeasance.

The origins of modern hack-and-leak operations date back to the early- and mid-2000s, when groups like el8, pHC (Phrack High Council), and zf0 were targeting people in the cybersecurity industry who, according to these groups, had forgone the hacker ethos and had sold out.
Later examples include hackers associated with Anonymous leaking data from U.S. government contractor HBGary, and North Korean hackers leaking emails stolen from Sony as retribution for the Hollywood comedy The Interview.

Some of the most famous examples are the hack against the now-defunct government spyware pioneer Hacking Team in 2015, and the infamous Russian government-led hack-and-leak of Democratic National Committee emails ahead of the 2016 U.S. presidential election. Iranian government hackers tried to emulate the 2016 playbook during the 2024 election.

Hacktivist

A particular kind of hacker who hacks for what they, and perhaps the public, perceive as a good cause, hence the portmanteau of the words "hacker" and "activist." Hacktivism has been around for more than two decades, starting perhaps with groups like the Cult of the Dead Cow in the late 1990s. Since then, there have been several high-profile examples of hacktivist hackers and groups, such as Anonymous, LulzSec, and Phineas Fisher.

(Also see: Hacker)

Infosec

Short for "information security," an alternative term used to describe defensive cybersecurity focused on the protection of data and information. "Infosec" may be the preferred term for industry veterans, while the term "cybersecurity" has become widely accepted. In modern times, the two terms have become largely interchangeable.

Infostealers

Infostealers are malware capable of stealing information from a person's computer or device. Infostealers such as Redline are often bundled in pirated software and, when installed, primarily seek out passwords and other credentials stored in the person's browser or password manager, then surreptitiously upload the victim's passwords to the attacker's systems. This lets the attacker sign in using those stolen passwords.
Some infostealers are also capable of stealing session tokens from a user's browser, which allow the attacker to sign in to a person's online account as if they were that user, but without needing their password or multifactor authentication code.

(See also: Malware)

Jailbreak

Jailbreaking is used in several contexts to mean the use of exploits and other hacking techniques to circumvent the security of a device, or to remove the restrictions a manufacturer puts on hardware or software. In the context of iPhones, for example, a jailbreak is a technique to remove Apple's restrictions on installing apps outside of its "walled garden," or to gain the ability to conduct security research on Apple devices, which is normally highly restricted. In the context of AI, jailbreaking means figuring out a way to get a chatbot to give out information that it's not supposed to.

Kernel

The kernel, as its name suggests, is the core part of an operating system that connects and controls virtually all hardware and software. As such, the kernel has the highest level of privileges, meaning it has access to virtually any data on the device. That's why, for example, apps such as antivirus and anti-cheat software run at the kernel level, as they require broad access to the device. Having kernel access allows these apps to monitor for malicious code.

Malware

Malware is a broad umbrella term that describes malicious software. Malware can take many forms and be used to exploit systems in different ways. As such, malware that is used for specific purposes can often be referred to as its own subcategory. For example, the type of malware used for conducting surveillance on people's devices is also called "spyware," while malware that encrypts files and demands money from its victims is called "ransomware."

(See also: Infostealers; Ransomware; Spyware)

Metadata

Metadata is information about something digital, rather than its contents.
That can include details about the size of a file or document, who created it, and when, or in the case of digital photos, where the image was taken and information about the device that took the photo. Metadata may not identify the contents of a file, but it can be useful in determining where a document came from or who authored it. Metadata can also refer to information about an exchange, such as who made a call or sent a text message, but not the contents of the call or the message.

Ransomware

Ransomware is a type of malicious software (or malware) that prevents device owners from accessing their data, typically by encrypting the person's files. Ransomware is usually deployed by cybercriminal gangs who demand a ransom payment, usually in cryptocurrency, in return for providing the private key to decrypt the person's data.

In some cases, ransomware gangs will steal the victim's data before encrypting it, allowing the criminals to extort the victim further by threatening to publish the files online. Paying a ransomware gang is no guarantee that the victim will get their stolen data back, or that the gang will delete the stolen data.

One of the first-ever ransomware attacks was documented in 1989, in which malware was distributed via floppy disk (an early form of removable storage) to attendees of the World Health Organization's AIDS conference. Since then, ransomware has evolved into a multibillion-dollar criminal industry as attackers refine their tactics and home in on big-name corporate victims.

(See also: Malware; Sanctions)

Remote code execution

Remote code execution refers to the ability to run commands or malicious code (such as malware) on a system over a network, often the internet, without requiring any human interaction from the target.
Remote code execution attacks can range in complexity but can be highly damaging when vulnerabilities are exploited.

(See also: Arbitrary code execution)

Sanctions

Cybersecurity-related sanctions work similarly to traditional sanctions in that they make it illegal for businesses or individuals to transact with a sanctioned entity. In the case of cyber sanctions, these entities are suspected of carrying out malicious cyber-enabled activities, such as ransomware attacks or the laundering of ransom payments made to hackers.

The U.S. Treasury's Office of Foreign Assets Control (OFAC) administers sanctions. The Treasury's Cyber-Related Sanctions Program was established in 2015 as part of the Obama administration's response to cyberattacks targeting U.S. government agencies and private sector U.S. entities.

While a relatively new addition to the U.S. government's bureaucratic armory against ransomware groups, sanctions are increasingly used to hamper and deter malicious state actors from conducting cyberattacks. Sanctions are often used against hackers who are out of reach of U.S. indictments or arrest warrants, such as ransomware crews based in Russia.

Spyware (commercial, government)

A broad term, like "malware," that covers a range of surveillance monitoring software. Spyware is typically used to refer to malware made by private companies, such as NSO Group's Pegasus, Intellexa's Predator, and Hacking Team's Remote Control System, among others, which the companies sell to government agencies. In more generic terms, these types of malware are like remote access tools, which allow their operators (usually government agents) to spy on and monitor their targets, giving them the ability to access a device's camera and microphone or exfiltrate data.
Spyware is also referred to as commercial or government spyware, or mercenary spyware.

(See also: Stalkerware)

Stalkerware

Stalkerware is a kind of surveillance malware (and a form of spyware) that is usually sold to ordinary consumers under the guise of child or employee monitoring software but is often used for the purposes of spying on the phones of unwitting individuals, oftentimes spouses and domestic partners. The spyware grants access to the target's messages, location, and more. Stalkerware typically requires physical access to the target's device so that the attacker can install it directly, which is often possible because the attacker knows the target's passcode.

(See also: Spyware)

Threat model

What are you trying to protect? Who are you worried about that could go after you or your data? How could these attackers get to the data? The answers to these kinds of questions are what will lead you to create a threat model. In other words, threat modeling is a process that an organization or an individual has to go through to design software that is secure, and devise techniques to secure it. A threat model can be focused and specific depending on the situation. A human rights activist in an authoritarian country has a different set of adversaries, and data, to protect than a large corporation in a democratic country that is worried about ransomware, for example.

Unauthorized

When we describe "unauthorized" access, we're referring to the accessing of a computer system by breaking any of its security features, such as a login prompt or a password, which would be considered illegal under the U.S. Computer Fraud and Abuse Act, or the CFAA.
The Supreme Court in 2021 clarified the CFAA, finding that accessing a system lacking any means of authorization (for example, a database with no password) is not illegal, as you cannot break a security feature that isn't there.

It's worth noting that "unauthorized" is a broadly used term, often used by companies subjectively, and as such has been used to describe everything from malicious hackers who steal someone's password to break in, through to incidents of insider access or abuse by employees.

Virtual private network (VPN)

A virtual private network, or VPN, is a networking technology that allows someone to virtually access a private network, such as their workplace or home, from anywhere else in the world. Many use a VPN provider to browse the web, thinking that this can help to avoid online surveillance.

TechCrunch has a skeptic's guide to VPNs that can help you decide if a VPN makes sense for you. If it does, we'll show you how to set up your own private and encrypted VPN server that only you control. And if it doesn't, we explore some of the privacy tools and other measures you can take to meaningfully improve your privacy online.

Vulnerability

A vulnerability (also referred to as a security flaw) is a type of bug that causes software to crash or behave in an unexpected way that affects the security of the system or its data. Sometimes, two or more vulnerabilities can be used in conjunction with each other, known as "vulnerability chaining," to gain deeper access to a targeted system.

(See also: Bug; Exploit)

Zero-day

A zero-day is a specific type of security vulnerability that has been publicly disclosed or exploited but the vendor who makes the affected hardware or software has not been given time (or "zero days") to fix the problem. As such, there may be no immediate fix or mitigation to prevent an affected system from being compromised. This can be particularly problematic for internet-connected devices.

(See also: Vulnerability)

First published on September 20, 2024.
Last updated on December 23, 2024.

TECHCRUNCH.COM

The TechCrunch Cyber Glossary

The cybersecurity world is full of technical lingo and jargon. At TechCrunch, we have been writing about cybersecurity for years, and even we sometimes need a refresher on what exactly a specific word or expression means. That's why we have created this glossary, which includes some of the most common (and not so common) words and expressions that we use in our articles, and explanations of how, and why, we use them.

This is a developing compendium, and we will update it regularly.

Advanced persistent threat (APT)

An advanced persistent threat (APT) is often categorized as a hacker, or group of hackers, that gains and maintains unauthorized access to a targeted system. The main aim of an APT intruder is to remain undetected for long periods of time, often to conduct espionage and surveillance, to steal data, or to sabotage critical systems.

APTs are traditionally well-resourced hackers, with the funding to pay for their malicious campaigns and access to hacking tools typically reserved for governments. As such, many of the long-running APT groups are associated with nation states, like China, Iran, North Korea, and Russia. In recent years, we've seen examples of non-nation-state cybercriminal groups that are financially motivated (such as theft and money laundering) carrying out cyberattacks similar in terms of persistence and capabilities to some traditional government-backed APT groups.

(See: Hacker)

Arbitrary code execution

The ability to run commands or malicious code on an affected system, often because of a security vulnerability in the system's software. Arbitrary code execution can be achieved either remotely or with physical access to an affected system (such as someone's device).
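To make the idea concrete, here is a minimal Python sketch of one classic way a software flaw turns into arbitrary code execution: a program that passes untrusted text to `eval()`. The function names and the "settings" scenario are hypothetical, invented only for illustration; the safer variant uses the standard library's `ast.literal_eval`, which accepts literal values only.

```python
import ast


def parse_setting_unsafe(text: str):
    # VULNERABLE (hypothetical example): eval() evaluates any Python expression,
    # so whoever controls `text` can make this program run code of their choosing.
    return eval(text)


def parse_setting_safe(text: str):
    # ast.literal_eval only accepts literals such as numbers, strings, lists,
    # and dicts; function calls and attribute lookups raise ValueError.
    return ast.literal_eval(text)


# A benign input behaves the same either way.
print(parse_setting_unsafe("[1, 2, 3]"))
print(parse_setting_safe("[1, 2, 3]"))

# An attacker-supplied string that calls into the os module. Here it only asks
# for the current directory, but it could just as easily run something harmful.
attacker_input = "__import__('os').getcwd()"
print(parse_setting_unsafe(attacker_input))  # the injected call actually runs

try:
    parse_setting_safe(attacker_input)
except ValueError:
    print("rejected: not a plain literal")
```

The safer version works by accepting only data and refusing everything else, rather than trying to filter out "dangerous" strings, which is easy to get wrong.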
In the cases where arbitrary code execution can be achieved over the internet, security researchers typically call this remote code execution.

Often, code execution is used as a way to plant a back door for maintaining long-term and persistent access to that system, or for running malware that can be used to access deeper parts of the system or other devices on the same network.

(See also: Remote code execution)

Black/white hat

Hackers historically have been categorized as either "black hat" or "white hat," usually depending on the motivations of the hacking activity carried out. A "black hat" hacker may be someone who might break the law and hack for money or personal gain, such as a cybercriminal. "White hat" hackers generally hack within legal bounds, such as part of a penetration test sanctioned by the target company, or to collect bug bounties by finding flaws in various software and disclosing them to the affected vendor. Those who hack with less clear-cut motivations may be regarded as a "gray hat." Famously, the hacking group the L0pht used the term gray hat in an interview with The New York Times Magazine in 1999. While still commonly used in modern security parlance, many have moved away from the "hat" terminology.

(Also see: Hacker, Hacktivist)

Botnet

Botnets are networks of hijacked internet-connected devices, such as webcams and home routers, that have been compromised by malware (or sometimes weak or default passwords) for the purposes of being used in cyberattacks. Botnets can be made up of hundreds or thousands of devices and are typically controlled by a command-and-control server that sends out commands to ensnared devices.
Botnets can be used for a range of malicious purposes, like using the distributed network of devices to mask and shield the internet traffic of cybercriminals, deliver malware, or harness their collective bandwidth to maliciously crash websites and online services with huge amounts of junk internet traffic.

(See also: Command-and-control server; Distributed denial-of-service)

Bug

A bug is essentially the cause of a software glitch, such as an error or a problem that causes the software to crash or behave in an unexpected way. In some cases, a bug can also be a security vulnerability.

Engineers used the term "bug" for faults before computers existed, but it is famously associated with an incident in 1947, at a time when early computers were the size of rooms and made up of heavy mechanical and moving equipment. The first known case of an actual bug found in a computer was when a moth was discovered disrupting one of these room-sized machines.

(See also: Vulnerability)

Command-and-control (C2) server

Command-and-control servers (also known as C2 servers) are used by cybercriminals to remotely manage and control their fleets of compromised devices and launch cyberattacks, such as delivering malware over the internet and launching distributed denial-of-service attacks.

(See also: Botnet; Distributed denial-of-service)

Cryptojacking

Cryptojacking is when a device's computational power is used, with or without the owner's permission, to generate cryptocurrency. Developers sometimes bundle code in apps and on websites, which then uses the device's processors to complete complex mathematical calculations needed to create new cryptocurrency. The generated cryptocurrency is then deposited in virtual wallets owned by the developer.

Some malicious hackers use malware to deliberately compromise large numbers of unwitting computers to generate cryptocurrency on a large and distributed scale.

Data breach

When we talk about data breaches, we ultimately mean the improper removal of data from where it should have been.
But the circumstances matter and can alter the terminology we use to describe a particular incident.

A data breach is when protected data was confirmed to have improperly left a system from where it was originally stored, usually confirmed when someone discovers the compromised data. More often than not, we're referring to the exfiltration of data by a malicious cyberattacker, or to data otherwise detected as a result of an inadvertent exposure. Depending on what is known about the incident, we may describe it in more specific terms where details are known.

(See also: Data exposure; Data leak)

Data exposure

A data exposure (a type of data breach) is when protected data is stored on a system that has no access controls, such as because of human error or a misconfiguration. This might include cases where a system or database is connected to the internet but without a password. Just because data was exposed doesn't mean the data was actively discovered, but it could nevertheless still be considered a data breach.

Data leak

A data leak (a type of data breach) is where protected data is stored on a system in a way that it was allowed to escape, such as due to a previously unknown vulnerability in the system or by way of insider access (such as an employee). A data leak can mean that data could have been exfiltrated or otherwise collected, but there may not always be the technical means, such as logs, to know for sure.

Distributed denial-of-service (DDoS)

A distributed denial-of-service, or DDoS, is a kind of cyberattack that involves flooding targets on the internet with junk web traffic in order to overload and crash the servers and cause the service, such as a website, online store, or gaming platform, to go down.

DDoS attacks are launched by botnets, which are made up of networks of hacked internet-connected devices (such as home routers and webcams) that can be remotely controlled by a malicious operator, usually from a command-and-control server.
Botnets can be made up of hundreds or thousands of hijacked devices.

While a DDoS is a form of cyberattack, these data-flooding attacks are not "hacks" in themselves, as they don't involve the breach and exfiltration of data from their targets, but instead cause a "denial of service" event to the affected service.

(See also: Botnet; Command-and-control server)

Encryption

Encryption is the way and means in which information, such as files, documents, and private messages, is scrambled to make the data unreadable to anyone other than its intended owner or recipient. Encrypted data is typically scrambled using an encryption algorithm, essentially a set of mathematical formulas that determines how the data is scrambled and unscrambled.

Nearly all modern encryption algorithms in use today are open source, allowing anyone (including security professionals and cryptographers) to review and check the algorithm to make sure it's free of faults or flaws. Some encryption algorithms are stronger than others, meaning data protected by some weaker algorithms can be decrypted by harnessing large amounts of computational power.

Encryption is different from encoding, which simply converts data into a different and standardized format, usually for the benefit of allowing computers to read the data.

(See also: End-to-end encryption)

End-to-end encryption (E2EE)

End-to-end encryption (or E2EE) is a security feature built into many messaging and file-sharing apps, and is widely considered one of the strongest ways of securing digital communications as they traverse the internet.

E2EE scrambles the file or message on the sender's device before it's sent in a way that allows only the intended recipient to decrypt its contents, making it near-impossible for anyone, including a malicious hacker or even the app maker, to snoop inside on someone's private communications.
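The core E2EE property, that only the two endpoints can read a message while the service relaying it sees only scrambled data, can be sketched in a few lines of Python. This is a toy under loudly stated assumptions: it uses a textbook Diffie-Hellman key agreement over the prime 2**127 - 1 with base 3, plus a hash-based keystream, both far too weak and simplistic for real use (no authentication, no nonces, unvetted parameters). The names `make_keypair`, `derive_shared_key`, and `xor_keystream` are hypothetical; real messaging apps rely on vetted, audited protocols rather than anything hand-rolled like this.

```python
import hashlib
import secrets

# ASSUMPTION (toy parameters): the Mersenne prime 2**127 - 1 and base 3 keep the
# sketch short. They are NOT a vetted Diffie-Hellman group and are far too small.
P = 2**127 - 1
G = 3


def make_keypair():
    """Return (private, public). Only the public value ever leaves the device."""
    private = secrets.randbelow(P - 2) + 1  # secret exponent in [1, P - 2]
    public = pow(G, private, P)
    return private, public


def derive_shared_key(my_private, their_public):
    """Both sides compute G**(a*b) mod P, then hash it into a 32-byte key."""
    shared = pow(their_public, my_private, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


def xor_keystream(key, data):
    """Toy stream cipher: XOR data with SHA-256(key || counter) blocks.

    Applying it twice with the same key returns the original bytes. It has no
    nonce and no integrity check, so it must not be reused or relied on.
    """
    out = bytearray()
    for offset in range(0, len(data), 32):
        block = hashlib.sha256(key + (offset // 32).to_bytes(8, "big")).digest()
        out.extend(b ^ k for b, k in zip(data[offset:offset + 32], block))
    return bytes(out)


# Everything the relaying server (for example, the app maker) gets to see.
relay_log = []

alice_private, alice_public = make_keypair()
bob_private, bob_public = make_keypair()
relay_log += [alice_public, bob_public]  # public values pass through the relay

# Alice encrypts on her own device before sending.
alice_key = derive_shared_key(alice_private, bob_public)
ciphertext = xor_keystream(alice_key, b"meet at noon")
relay_log.append(ciphertext)

# Bob derives the same key on his own device and decrypts.
bob_key = derive_shared_key(bob_private, alice_public)
print(xor_keystream(bob_key, ciphertext))  # b'meet at noon'
```

At the end, `relay_log` holds only public values and ciphertext; the relay never receives either private exponent, so it has no direct way to derive the key. Production protocols also authenticate the public keys, so that a malicious relay cannot quietly substitute its own.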
In recent years, E2EE has become the default security standard for many messaging apps, including Apple's iMessage, Facebook Messenger, Signal, and WhatsApp.

E2EE has also become the subject of governmental frustration in recent years, as encryption makes it impossible for tech companies or app providers to hand over information that they themselves do not have access to.

(See also: Encryption)

Escalation of privileges

Most modern systems are protected with multiple layers of security, including the ability to set up user accounts with more restricted access to the underlying system's configurations and settings. This prevents these users, or anyone with improper access to one of these user accounts, from tampering with the core underlying system. However, an "escalation of privileges" event can involve exploiting a bug or tricking the system into granting the user more access rights than they should have.

Malware can also take advantage of privilege-escalation bugs or flaws to gain deeper access to a device or a connected network, potentially allowing the malware to spread.

Exploit

An exploit is the way and means in which a vulnerability is abused or taken advantage of, usually in order to break into a system.

(See also: Bug; Vulnerability)

Extortion

In general terms, extortion is the act of obtaining something, usually money, through the use of force and intimidation. Cyber extortion is no different, as it typically refers to a category of cybercrime whereby attackers demand payment from victims by threatening to damage, disrupt, or expose their sensitive information.

Extortion is often used in ransomware attacks, where hackers typically exfiltrate company data before demanding a ransom payment from the hacked victim.
But extortion has quickly become its own category of cybercrime, with many, often younger, financially motivated hackers, opting to carry out extortion-only attacks, which snub the use of encryption in favor of simple data theft.(Also see: Ransomware)ForensicsForensic investigations involve analyzing data and information contained in a computer, server, or mobile device, looking for evidence of a hack, crime, or some sort of malfeasance. Sometimes, in order to access the data, corporate or law enforcement investigators rely on specialized devices and tools, like those made by Cellebrite and Grayshift, which are designed to unlock and break the security of computers and cellphones to access the data within.HackerThere is no one single definition of hacker. The term has its own rich history, culture, and meaning within the security community. Some incorrectly conflate hackers, or hacking, with wrongdoing.By our definition and use, we broadly refer to a hacker as someone who is a breaker of things, usually by altering how something works to make it perform differently in order to meet their objectives. In practice, that can be something as simple as repairing a machine with non-official parts to make it function differently as intended, or work even better.In the cybersecurity sense, a hacker is typically someone who breaks a system or breaks the security of a system. That could be anything from an internet-connected computer system to a simple door lock. 
But the persons intentions and motivations (if known) matter in our reporting, and guides how we accurately describe the person, or their activity.There are ethical and legal differences between a hacker who works as a security researcher, who is professionally tasked with breaking into a companys systems with their permission to identify security weaknesses that can be fixed before a malicious individual has a chance to exploit them; and a malicious hacker who gains unauthorized access to a system and steals data without obtaining anyones permission.Because the term hacker is inherently neutral, we generally apply descriptors in our reporting to provide context about who were talking about. If we know that an individual works for a government and is contracted to maliciously steal data from a rival government, were likely to describe them as a nation-state or government hackeradvanced persistent threat), for example. If a gang is known to use malware to steal funds from individuals bank accounts, we may describe them as financially motivated hackers, or if there is evidence of criminality or illegality (such as an indictment), we may describe them simply as cybercriminals.And, if we dont know motivations or intentions, or a person describes themselves as such, we may simply refer to a subject neutrally as a hacker, where appropriate.Hack-and-leak operationSometimes, hacking and stealing data is only the first step. In some cases, hackers then leak the stolen data to journalists, or directly post the data online for anyone to see. The goal can be either to embarrass the hacking victim, or to expose alleged malfeasance.The origins of modern hack-and-leak operations date back to the early- and mid-2000s, when groups like el8, pHC (Phrack High Council) and zf0 were targeting people in the cybersecurity industry who, according to these groups, had foregone the hacker ethos and had sold out. 
Later, there are the examples of hackers associated with Anonymous and leaking data from U.S. government contractor HBGary, and North Korean hackers leaking emails stolen from Sony as retribution for the Hollywood comedy, The Interview.Some of the most recent and famous examples are the hack against the now-defunct government spyware pioneer Hacking Team in 2015, and the infamous Russian government-led hack-and-leak of Democratic National Committee emails ahead of the 2016 U.S. presidential elections. Iranian government hackers tried to emulate the 2016 playbook during the 2024 elections.HacktivistA particular kind of hacker who hacks for what they and perhaps the public perceive as a good cause, hence the portmanteau of the words hacker and activist. Hacktivism has been around for more than two decades, starting perhaps with groups like the Cult of the Dead Cow in the late 1990s. Since then, there have been several high profile examples of hacktivist hackers and groups, such as Anonymous, LulzSec, and Phineas Fisher.(Also see: Hacker)InfosecShort for information security, an alternative term used to describe defensive cybersecurity focused on the protection of data and information. Infosec may be the preferred term for industry veterans, while the term cybersecurity has become widely accepted. In modern times, the two terms have become largely interchangeable.InfostealersInfostealers are malware capable of stealing information from a persons computer or device. Infostealers are often bundled in pirated software, like Redline, which when installed will primarily seek out passwords and other credentials stored in the persons browser or password manager, then surreptitiously upload the victims passwords to the attackers systems. This lets the attacker sign in using those stolen passwords. 
Some infostealers are also capable of stealing session tokens from a users browser, which allow the attacker to sign in to a persons online account as if they were that user but without needing their password or multifactor authentication code.(See also: Malware)JailbreakJailbreaking is used in several contexts to mean the use of exploits and other hacking techniques to circumvent the security of a device, or removing the restrictions a manufacturer puts on hardware or software. In the context of iPhones, for example, a jailbreak is a technique to remove Apples restrictions on installing apps outside of its walled garden or to gain the ability to conduct security research on Apple devices, which is normally highly restricted. In the context of AI, jailbreaking means figuring out a way to get a chatbot to give out information that its not supposed to.KernelThe kernel, as its name suggests, is the core part of an operating system that connects and controls virtually all hardware and software. As such, the kernel has the highest level of privileges, meaning it has access to virtually any data on the device. Thats why, for example, apps such as antivirus and anti-cheat software run at the kernel level, as they require broad access to the device. Having kernel access allows these apps to monitor for malicious code.MalwareMalware is a broad umbrella term that describes malicious software. Malware can land in many forms and be used to exploit systems in different ways. As such, malware that is used for specific purposes can often be referred to as its own subcategory. For example, the type of malware used for conducting surveillance on peoples devices is also called spyware, while malware that encrypts files and demands money from its victims is called ransomware.(See also: Infostealers; Ransomware; Spyware)Metadata is information about something digital, rather than its contents. 
That can include details about the size of a file or document, who created it, and when, or in the case of digital photos, where the image was taken and information about the device that took the photo. Metadata may not identify the contents of a file, but it can be useful in determining where a document came from or who authored it. Metadata can also refer to information about an exchange, such as who made a call or sent a text message, but not the contents of the call or the message.RansomwareRansomware is a type of malicious software (or malware) that prevents device owners from accessing its data, typically by encrypting the persons files. Ransomware is usually deployed by cybercriminal gangs who demand a ransom payment usually cryptocurrency in return for providing the private key to decrypt the persons data.In some cases, ransomware gangs will steal the victims data before encrypting it, allowing the criminals to extort the victim further by threatening to publish the files online. Paying a ransomware gang is no guarantee that the victim will get their stolen data back, or that the gang will delete the stolen data.One of the first-ever ransomware attacks was documented in 1989, in which malware was distributed via floppy disk (an early form of removable storage) to attendees of the World Health Organizations AIDS conference. Since then, ransomware has evolved into a multi-billion dollar criminal industry as attackers refine their tactics and hone in on big-name corporate victims.(See also: Malware; Sanctions)Remote code executionRemote code execution refers to the ability to run commands or malicious code (such as malware) on a system from over a network, often the internet, without requiring any human interaction from the target. 
Remote code execution attacks can range in complexity but can be highly damaging when vulnerabilities are exploited.(See also: Arbitrary code execution)SanctionsCybersecurity-related sanctions work similarly to traditional sanctions in that they make it illegal for businesses or individuals to transact with a sanctioned entity. In the case of cyber sanctions, these entities are suspected of carrying out malicious cyber-enabled activities, such as ransomware attacks or the laundering of ransom payments made to hackers.The U.S. Treasurys Office of Foreign Assets Control (OFAC) administers sanctions. The Treasurys Cyber-Related Sanctions Program was established in 2015 as part of the Obama administrations response to cyberattacks targeting U.S. government agencies and private sector U.S. entities.While a relatively new addition to the U.S. governments bureaucratic armory against ransomware groups, sanctions are increasingly used to hamper and deter malicious state actors from conducting cyberattacks. Sanctions are often used against hackers who are out of reach of U.S. indictments or arrest warrants, such as ransomware crews based in Russia.Spyware (commercial, government)A broad term, like malware, that covers a range of surveillance monitoring software. Spyware is typically used to refer to malware made by private companies, such as NSO Groups Pegasus, Intellexas Predator, and Hacking Teams Remote Control System, among others, which the companies sell to government agencies. In more generic terms, these types of malware are like remote access tools, which allows their operators usually government agents to spy and monitor their targets, giving them the ability to access a devices camera and microphone or exfiltrate data. 
Spyware is also referred to as commercial or government spyware, or mercenary spyware.
(See also: Stalkerware)
Stalkerware
Stalkerware is a kind of surveillance malware (and a form of spyware) that is usually sold to ordinary consumers under the guise of child or employee monitoring software but is often used to spy on the phones of unwitting individuals, oftentimes spouses and domestic partners. The spyware grants access to the target's messages, location, and more. Stalkerware typically requires physical access to a target's device, which gives the attacker the ability to install it directly, often because the attacker knows the target's passcode.
(See also: Spyware)
Threat model
What are you trying to protect? Who are you worried about that could go after you or your data? How could these attackers get to the data? The answers to these kinds of questions are what will lead you to create a threat model. In other words, threat modeling is a process that an organization or an individual has to go through to design software that is secure, and to devise techniques to secure it. A threat model can be focused and specific depending on the situation. For example, a human rights activist in an authoritarian country has a different set of adversaries, and data to protect, than a large corporation in a democratic country that is worried about ransomware.
Unauthorized
When we describe "unauthorized" access, we're referring to accessing a computer system by breaking any of its security features, such as a login prompt or a password, which would be considered illegal under the U.S. Computer Fraud and Abuse Act, or the CFAA.
The Supreme Court in 2021 clarified the CFAA, finding that accessing a system lacking any means of authorization (for example, a database with no password) is not illegal, as you cannot break a security feature that isn't there.
It's worth noting that "unauthorized" is a broadly used term that companies often apply subjectively. As such, it has been used to describe everything from malicious hackers who steal someone's password to break in, to incidents of insider access or abuse by employees.
Virtual private network (VPN)
A virtual private network, or VPN, is a networking technology that allows someone to virtually access a private network, such as their workplace or home, from anywhere else in the world. Many use a VPN provider to browse the web, thinking that this can help them avoid online surveillance.
TechCrunch has a skeptic's guide to VPNs that can help you decide if a VPN makes sense for you. If it does, we'll show you how to set up your own private and encrypted VPN server that only you control. And if it doesn't, we explore some of the privacy tools and other measures you can take to meaningfully improve your privacy online.
Vulnerability
A vulnerability (also referred to as a security flaw) is a type of bug that causes software to crash or behave in an unexpected way that affects the security of the system or its data. Sometimes, two or more vulnerabilities can be used in conjunction with each other, known as "vulnerability chaining," to gain deeper access to a targeted system.
(See also: Bug; Exploit)
Zero-day
A zero-day is a specific type of security vulnerability that has been publicly disclosed or exploited, but the vendor who makes the affected hardware or software has not been given time (or "zero days") to fix the problem. As such, there may be no immediate fix or mitigation to prevent an affected system from being compromised. This can be particularly problematic for internet-connected devices.
(See also: Vulnerability)
First published on September 20, 2024.
Last updated on December 23, 2024.

TECHCRUNCH.COM
Prosus buys Despegar for $1.7B, taking a bite out of Latin America's travel sector
Yet another major investment is going down in the travel sector, underscoring its ongoing rebound after the Covid-19 pandemic. Prosus, the tech conglomerate controlled by Naspers, is paying $1.7 billion to acquire Despegar, one of the biggest online travel agencies in Latin America, to scale up its operations in the region.
Despegar's board of directors has approved the deal, which will now go to a shareholder vote, Prosus said in a statement today. It expects the deal to close in Q2 of 2025.
With GDP across LatAm expected to grow 2%-3% next year, Prosus wants to use Despegar to lean into greater economies of scale in the region. It already owns food delivery platform iFood and Sympla, Latin America's answer to Ticketmaster. After the deal closes, it will have 100 million customers across all three properties.
"This acquisition is a clear demonstration of our strategy to build value by creating a high-quality ecosystem of complementary businesses," said Fabricio Bloisi, CEO of Prosus Group, in a statement. "Despegar is a highly profitable company, with an attractive market position and an experienced management team, making it a natural addition to our presence in Latin America. We will accelerate Despegar's growth by leveraging the extensive customer touchpoints within our portfolio."
This is a decent outcome for Despegar, which has struggled to grow during the last decade of economic, social and public health turmoil in the region.
The company, based in Argentina, is publicly traded on the NYSE and had a market cap of $1.24 billion at market close last Friday. The deal, which will see Prosus pay $19.50 per share, represents a 33% premium on that price. Notably, though, it is still less than the market cap Despegar had on its first day of public trading in 2017.
On Despegar's side, the deal could give the company a boost of investment in the near term.
"For our customers, this means access to an expanded portfolio of services, better experiences, greater loyalty benefits and more complete solutions tailored to their needs," said Damián Scokin, CEO of Despegar, in a statement.
The deal is one of the latest in a spate of investments in travel and tourism technology. Most recently, last week, Hostaway, which builds software for the private short-term rental market, raised $365 million in a round led by General Atlantic. General Atlantic, as it happens, was once an investor in Despegar when it was still a private company. Other backers over the years included Accel, Tiger Global, Sequoia, hotel giant Accor, TPG and even Yahoo (parent company of TechCrunch).
Despegar is one of the older and bigger online travel brands in the market. It has been around in one form or another since 1999, during the first dot-com boom, and it also controls another major Latin American travel brand, Decolar in Brazil.
These days, it is active in some 19 different markets in the region. It operates both a direct-to-consumer service and a white-label offering used by banks, airlines and other retailers selling travel services to their customers.
And yes, it's worked to keep up with the times: it has built a conversational chatbot called Sofia. Competing against the likes of Hotel Urbano, Despegar says that it sees some 9.5 million transactions annually, working out to $5.3 billion in gross bookings, $706 million in revenue, and EBITDA of $116 million (based on its full-year 2023 results).

WWW.ARTOFVFX.COM
Warfare
Experience the intensity of Warfare, the gripping war film that brings together Alex Garland and Ray Mendoza in their highly anticipated collaboration after Civil War!
The VFX are made by:
Cinesite (VFX Supervisor: Simon Stanley-Clamp)
Directors: Alex Garland, Ray Mendoza
Release Date: 2025
© Vincent Frei, The Art of VFX, 2024
The post Warfare appeared first on The Art of VFX.