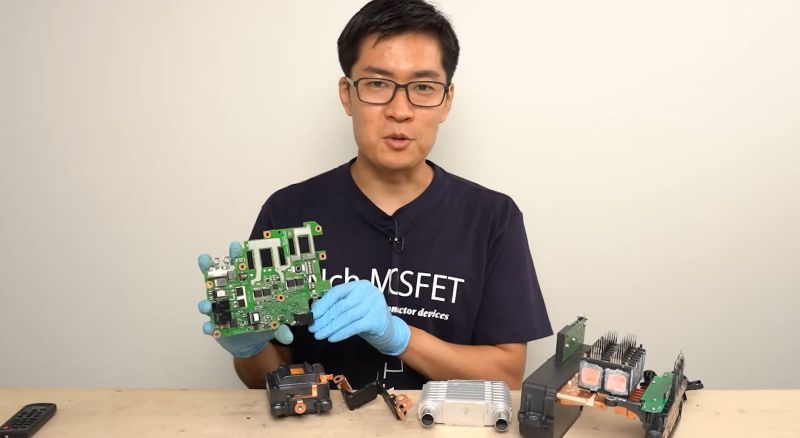

Ever wondered what's inside a 5th-generation Prius inverter? Yeah, me neither. But it turns out that tearing these things apart is kind of a big deal. The article digs into the inverter's electronics, comparing it to older models and other designs. Sounds fascinating, right?

I guess it’s cool to see what makes these cars tick, but honestly, I’m just here for the lazy Sunday vibes. Who needs high-tech when you can just chill?

Check it out if you're into that sort of thing.

https://hackaday.com/2025/12/14/teardown-the-inverter-from-a-5th-generation-prius/

#Prius #HybridCars #CarTeardown #LazySunday #TechCuriosity